Dataset Showcase

Stereo Camera Assembly

Two Canon EOS 6D full-frame DSLRs mounted on a precision stereo rig capture synchronized 20 MP image pairs. A zoom-lens system enables systematic variation across 10 focal lengths (28–70 mm) and 5 aperture stops (f/2.8–f/22), reproducing the full optics space of professional DSLR photography.

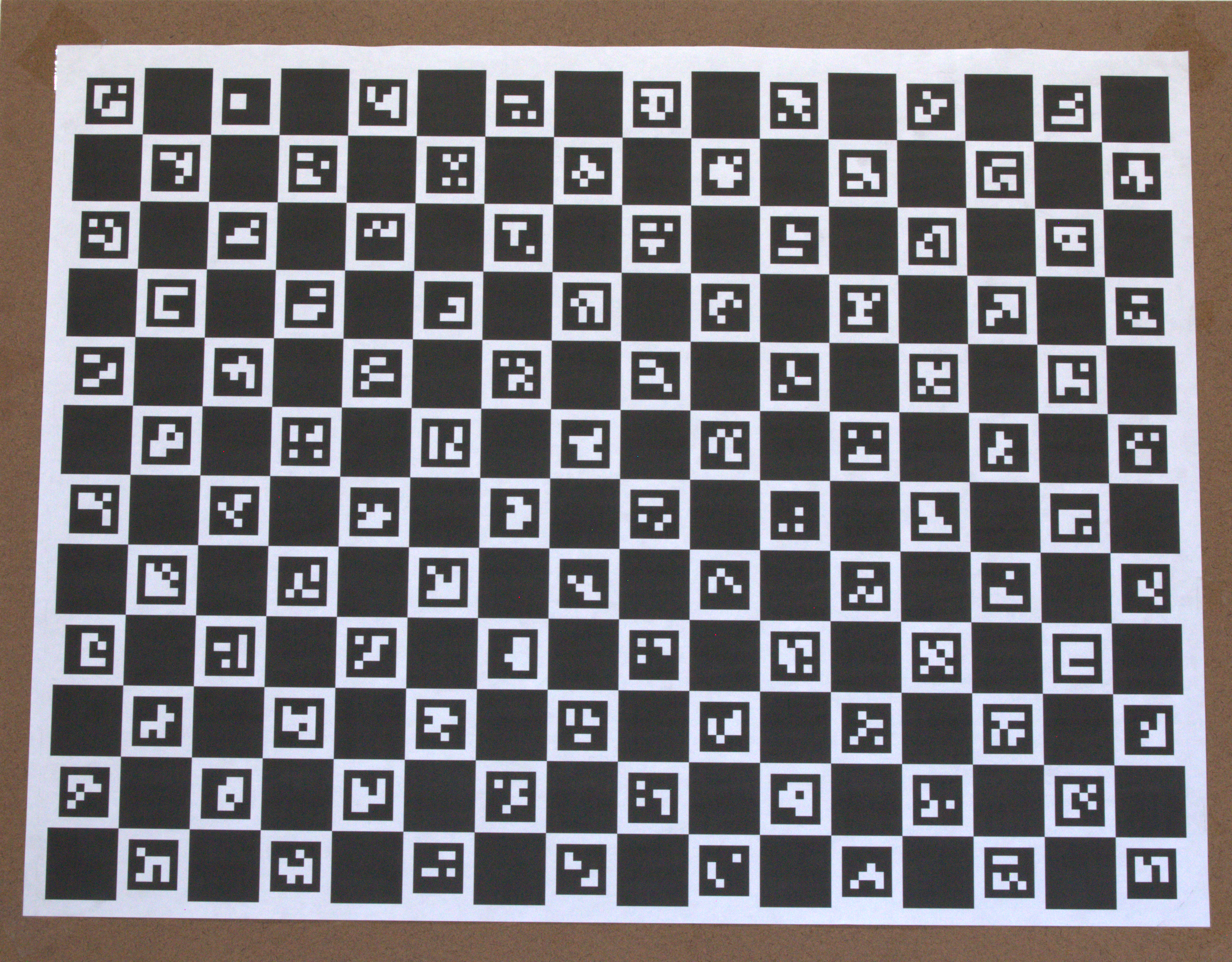
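
To give a feel for what the 28–70 mm range means for framing, the sketch below estimates the horizontal field of view at both ends of the zoom range. It assumes a nominal 36 mm full-frame sensor width and an ideal rectilinear lens focused at infinity. The function name `horizontal_fov_deg` is illustrative and not part of any dataset tooling.

```python
import math

# Assumption: nominal full-frame sensor width; the actual sensor may differ slightly.
SENSOR_WIDTH_MM = 36.0


def horizontal_fov_deg(focal_length_mm, sensor_width_mm=SENSOR_WIDTH_MM):
    """Horizontal field of view of an ideal rectilinear lens focused at infinity."""
    if focal_length_mm <= 0:
        raise ValueError("focal length must be positive")
    half_angle = math.atan(sensor_width_mm / (2.0 * focal_length_mm))
    return math.degrees(2.0 * half_angle)


# The two ends of the zoom range quoted in the text.
for f in (28.0, 70.0):
    print(f"{f:.0f} mm -> {horizontal_fov_deg(f):.1f} deg horizontal FoV")
```

Under these assumptions the horizontal field of view narrows from roughly 65° at 28 mm to roughly 29° at 70 mm.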

ChArUco Calibration Pattern

A ChArUco board enables sub-pixel-accurate intrinsic and extrinsic calibration. Besides a global calibration set, each scene and each focal length has a dedicated calibration set — supporting both classical (OpenCV) and learning-based calibration methods for stereo geometry recovery across every optical configuration.

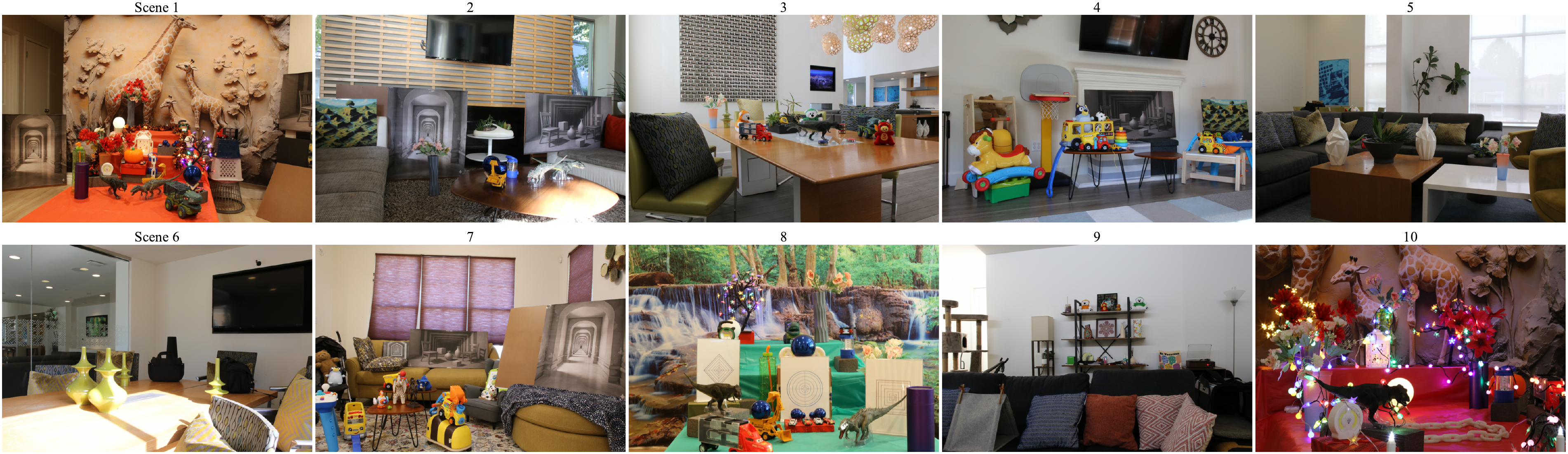

MODEST Dataset Overview

For 10 scenes with varying complexity, lighting, and background, images are captured with two identical camera assemblies at 10 focal lengths (28–70 mm) and 5 apertures (f/2.8–f/22), giving 2,000 images per scene and 20,000 in total. Challenging elements include multi-scale optical illusions, reflective surfaces, transparent glass doors, sharp lighting changes, and ambiguous background depths. Dedicated calibration sets per scene and focal length support classical and learning-based intrinsic and extrinsic calibration methods.
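
The image totals can be checked with a few lines of arithmetic. This is a sketch under one assumption: that the per-scene figure is the product of focal lengths, apertures, 20 stereo viewpoints per focal-length and aperture pair, and 2 cameras. The constant and function names are illustrative.

```python
# Counts stated in the dataset description.
NUM_SCENES = 10
NUM_FOCAL_LENGTHS = 10
NUM_APERTURES = 5

# Assumed factors behind the per-scene total: 20 stereo viewpoints for each
# focal-length/aperture pair, each viewpoint yielding a left and a right image.
NUM_VIEWPOINTS = 20
CAMERAS_PER_RIG = 2


def images_per_scene():
    """Images captured for one scene under the assumptions above."""
    return NUM_FOCAL_LENGTHS * NUM_APERTURES * NUM_VIEWPOINTS * CAMERAS_PER_RIG


def total_images():
    """Images across all scenes."""
    return NUM_SCENES * images_per_scene()


print(images_per_scene(), total_images())
```

With these factors the product reproduces the stated 2,000 images per scene and 20,000 overall.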

Scene Diversity & Challenging Elements

Scenes are intentionally curated to stress-test vision algorithms: reflective surfaces that mirror the environment, semi-transparent glass doors, intricate fine-grained textures, and high-contrast point lights.

Multi-Scale Depth Illusions

Scenes include objects at multiple scales that generate ambiguous, competing depth cues — foreground elements that appear far, and distant backgrounds that visually anchor to the near field. These configurations expose systematic failure modes in learned depth estimation and stereo matching pipelines, revealing where model priors break down on real, high-resolution optical data.

Depth of Field Effects

Systematic aperture variation from f/2.8 to f/22 produces a wide continuum of depth-of-field effects. Wide-open apertures yield rich foreground/background bokeh; stopped-down apertures maintain near-full scene sharpness — enabling rigorous, controlled evaluation of shallow DoF rendering and defocus deblurring models under identical scene and illumination conditions.
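
As a rough illustration of how strongly the aperture range changes depth of field, the sketch below applies the standard thin-lens hyperfocal approximation. The 0.03 mm circle of confusion (a common full-frame convention), the 60 mm focal length, and the 3 m subject distance are assumptions chosen for the example and are not dataset parameters. The function names are hypothetical.

```python
import math


def hyperfocal_mm(focal_mm, f_number, coc_mm=0.03):
    """Hyperfocal distance H = f^2 / (N * c) + f, all lengths in millimetres."""
    return focal_mm ** 2 / (f_number * coc_mm) + focal_mm


def dof_limits_mm(focal_mm, f_number, subject_mm, coc_mm=0.03):
    """Near and far limits of acceptable sharpness for a subject at subject_mm."""
    h = hyperfocal_mm(focal_mm, f_number, coc_mm)
    near = subject_mm * (h - focal_mm) / (h + subject_mm - 2.0 * focal_mm)
    if subject_mm >= h:
        # At or beyond the hyperfocal distance everything to infinity is sharp.
        far = math.inf
    else:
        far = subject_mm * (h - focal_mm) / (h - subject_mm)
    return near, far


# Assumed example: 60 mm lens, subject at 3 m, widest and narrowest aperture.
for n in (2.8, 22.0):
    near, far = dof_limits_mm(60.0, n, 3000.0)
    print(f"f/{n:g}: sharp from {near / 1000:.2f} m to {far / 1000:.2f} m")
```

Under these assumptions the in-focus zone grows from a few tenths of a metre at f/2.8 to several metres at f/22, which is the contrast the aperture sweep is designed to expose.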

Multi-Viewpoint Coverage

20 stereo viewpoints are captured per focal configuration per scene. The 5 shown above illustrate the spatial diversity: they span left, middle, and right camera positions with varied subject framing, providing strong geometric constraints for stereo matching, multi-view consistency analysis, and novel-view synthesis.

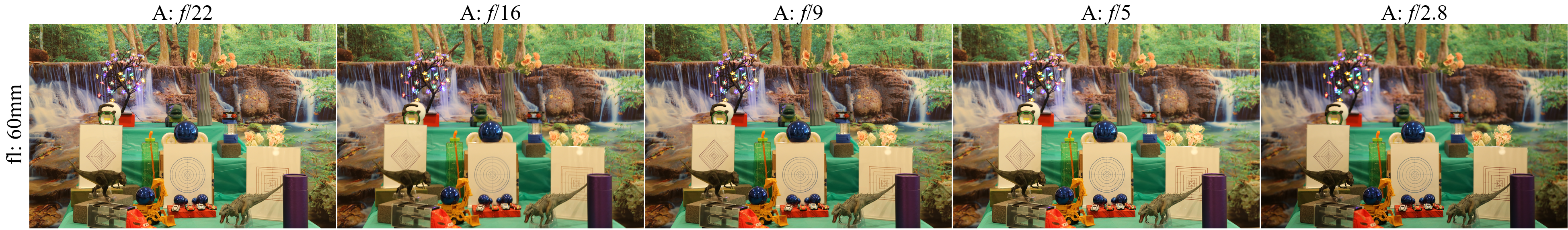

Aperture Variation per Focal Length

At each of the 10 focal lengths, all 5 aperture stops are captured, from wide open (f/2.8) to fully stopped down (f/22). Shown here at 60 mm, the transition from extreme subject-isolation bokeh to near-infinite depth of field illustrates the optical parameter space systematically covered by MODEST.